Google's AI Overview is not ranking your page. It's choosing which pages to cite. If your rankings are holding and your traffic is falling, you're not getting beaten on SEO. You're getting skipped during source selection. The question of how to rank in AI Overviews is not the question you think it is. No amount of sharper headlines, tighter FAQ schema, or longer-tail keyword targeting fixes that. None of those are why Google's grounding layer chose someone else's passage instead of yours. The problem sits upstream, in decisions you made early or will spend months retrofitting.

What I Keep Seeing Across AI Overview Audits

The pattern I keep seeing across real client and site-review work: teams focus on page-level SEO changes, but the bigger visibility constraint is usually upstream. Weak entity consistency, thin topical support, and poor extractable structure make it harder for AI systems to reuse or cite the page, even when the page itself looks "optimized." The fix is a different build order.

Why Rankings Stopped Predicting Citations

Open your Search Console. Look at the last eighteen months. If you're like most sites tracking this closely, impressions are up, rankings are stable or improving, and clicks have quietly come apart from both. You didn't get worse. The selection process changed. The pages ranking at the top used to be the pages getting the traffic. In 2024 that stopped being reliably true, and the gap between ranking and citation is still widening.

Grow & Convert put it directly earlier this year: "rankings, traffic, and conversions have always grown together... But now we're seeing two metrics rising (rankings and conversions) while traffic falls." Kevin Indig's 70-user UX study was sharper: "Clicks are empty calories... Only 20% of the time does the AIO give participants the final answer. But 80% of the time they clicked on other results, mostly organic results."

The hard number from Fractl and Search Engine Land's 2025 survey of 800+ marketers: "Nearly 4 in 10 marketers (39%) report traffic losses since the rollout." And from Ahrefs: "the presence of an AI Overview in the search results correlated with a 34.5% lower average clickthrough rate (CTR) for the top-ranking page."

If that pattern matches yours, the problem often isn't your content.

How AI Overviews Actually Select Sources

AI Overviews don't rank pages. They select sources. When a user triggers an AI Overview, Google runs a retrieval process against its index, then a generation process that selects specific sources to ground the answer. The retrieval pool is smaller than the SERP. Sources are chosen based on how reusable the content is, meaning how easily Google's system can extract a clean, attributable passage to support a specific claim.

Ahrefs' September 2025 research made the gap visible: "the citation overlap between AI assistants and Google and Bing's top 10 stands at 11%... AI Overviews follow the SERPs, AI assistants don't." Inside Google's own AIO, only about 38% of cited pages rank in the organic top 10 for the same query. The rest come from elsewhere in the index, from YouTube, and from sources Google treats as authoritative at the entity level.

Three things tend to drive source selection, and all three are primarily architectural:

| Signal | What it measures | How to test |
|---|---|---|
| Entity match | Does your domain, author, or organization have a consistent, machine-readable identity in this subject area? | Pull JSON-LD across pages. Are Organization, Person, and methodology @id values identical? |
| Chunk extractability | Does each H2 section lead with a self-contained paragraph that answers the section's implied question? | Screenshot the first paragraph under each H2. Does it still answer the question in isolation? |
| Cross-platform signal | Does your entity appear across multiple platforms, or only on your own domain? | Search your brand name on YouTube, LinkedIn, and industry publications. Count the mentions. |

Aleyda Solís flagged chunk extractability directly: "With AI search this happens at a passage or chunk level of relevance." Ahrefs' updated citation study on cross-platform signal: "YouTube is the most cited domain in AI Overviews today, and has grown 34% over the last six months."

These are structural problems more than content problems. Good content still matters, but without the architecture, good content gets skipped.

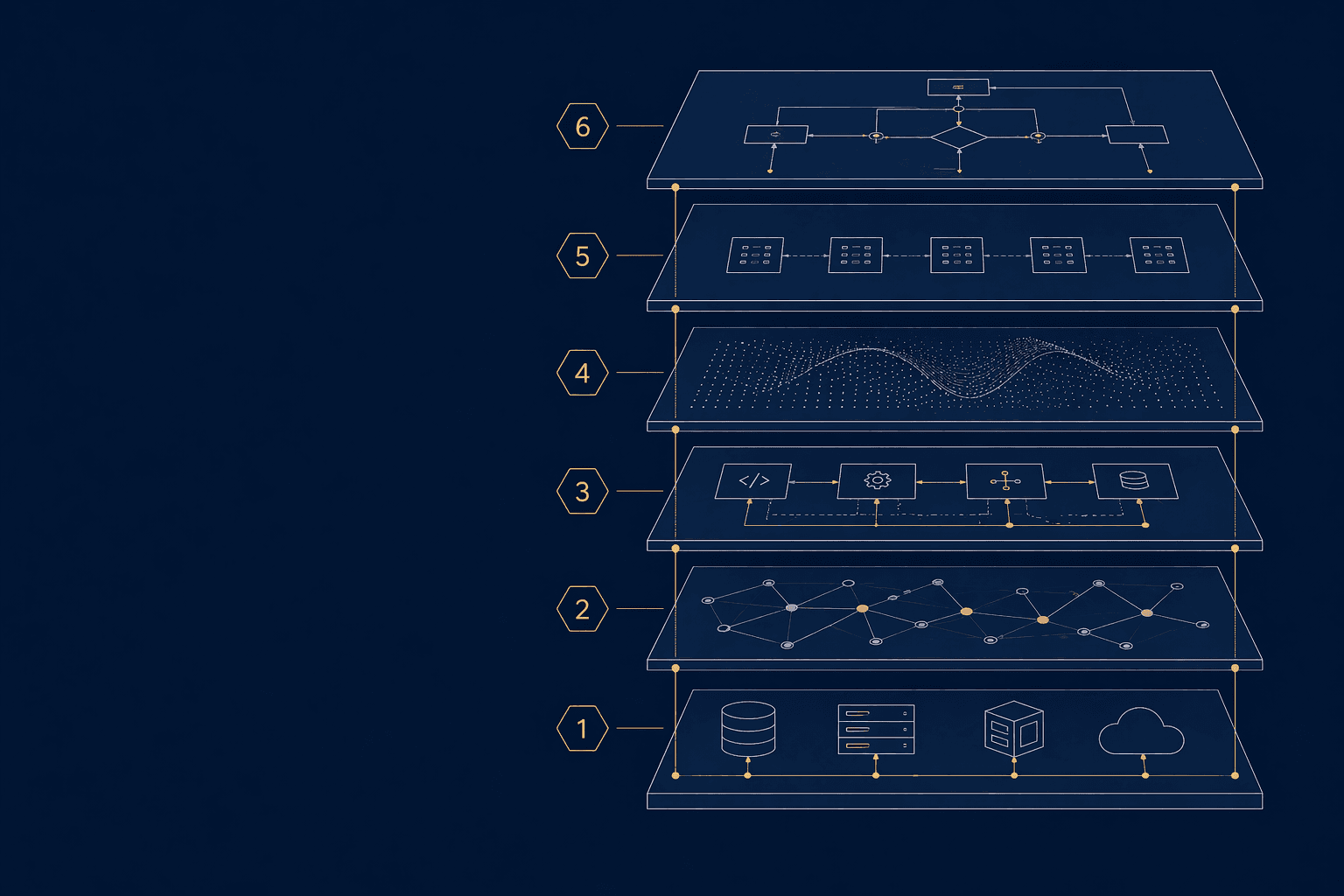

One clarification: this framework does not claim Google uses these six layers as direct ranking factors. It organizes the structural conditions that make a page easier for AI systems to identify, parse, trust, and cite.

The Six-Layer Framework for AI Overview Citation

Six layers govern how to rank in AI Overviews as a citation outcome, not a ranking outcome. They break into three pairs. Two foundation layers establish where you have topical authority and who you are as an entity. Two content layers determine the shape and scannability of the material. Two signal layers determine whether your entity is visible beyond your own domain. Build them in that order. The dependency flows top-down, and skipping a step upstream makes every step below it harder.

Layer 1: Cluster depth

Topical authority isn't one page. It's a connected set of pages that collectively cover an entity space with enough depth that Google associates the domain with the subject. Cluster depth typically supports entity match. A single strong page surrounded by nothing else signals thin authority. A small set of interconnected pages on the same topic (a pillar, supporting posts, and internal links between them) signals authority earned over time.

Layer 2: Entity consistency

Every reference to your organization, authors, and proprietary methodologies needs the same machine-readable identity across your entire site. Chunk-level relevance fails when the chunk references an entity whose identity drifts from page to page. Organization schema, Person schema, and DefinedTerm nodes should carry identical @id values site-wide.

Layer 3: Extractable chunks

Every H2 section should open with a self-contained paragraph that answers the section's implied question in isolation. Aim for roughly 60 to 100 words. If a user can screenshot only that paragraph and still get a useful answer, it's extractable. This is where most AI Overview guidance stops. The other five layers are what make this layer actually work.
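A rough way to pre-screen opening paragraphs before the manual screenshot test is sketched below. It only checks the 60-to-100-word guideline and flags paragraphs that open with a word that usually leans on earlier text. The list of "dependent openers" is my own assumption, not something Google publishes, and passing this check does not mean a paragraph is actually extractable.

```python
# Range taken from the "roughly 60 to 100 words" guideline above.
MIN_WORDS = 60
MAX_WORDS = 100

# Hypothetical heuristic: openers that often depend on a previous paragraph.
DEPENDENT_OPENERS = ("this ", "that ", "these ", "those ", "it ", "as mentioned", "as noted")


def check_opening_paragraph(paragraph: str) -> dict:
    """Return simple heuristics about whether a paragraph could stand alone."""
    word_count = len(paragraph.split())
    lowered = paragraph.strip().lower()
    return {
        "word_count": word_count,
        "in_range": MIN_WORDS <= word_count <= MAX_WORDS,
        "dependent_opener": lowered.startswith(DEPENDENT_OPENERS),
    }
```

Treat the result as a triage list. A human still has to read each flagged paragraph in isolation and decide whether it answers the section's implied question.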

Layer 4: Freshness signals

AI Overviews appear to favor content that signals recent review. ISO 8601 datetime with timezone in schema. Visible "last updated" dates. Content that references current conditions, not conditions three years ago.
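For the schema half of this layer, a minimal sketch of producing an ISO 8601 datetime with a timezone offset using Python's standard library. The helper name and the example date are hypothetical. The only real point is that a naive datetime (one without timezone info) should be rejected rather than silently serialized without an offset.

```python
from datetime import datetime, timedelta, timezone


def iso8601_with_tz(dt: datetime) -> str:
    """Serialize a timezone-aware datetime as ISO 8601, e.g. 2025-01-15T09:30:00-05:00."""
    if dt.tzinfo is None or dt.utcoffset() is None:
        raise ValueError("datetime must be timezone-aware")
    return dt.isoformat(timespec="seconds")


if __name__ == "__main__":
    # Hypothetical publish time at UTC-5.
    published = datetime(2025, 1, 15, 9, 30, tzinfo=timezone(timedelta(hours=-5)))
    print(iso8601_with_tz(published))
```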

Layer 5: Cross-platform presence

Ahrefs: "mentions on YouTube, in video titles, transcripts, and descriptions, are the strongest correlating factor with AI Overview visibility." If your entity only exists on your own domain, you're easier to overlook. LinkedIn, YouTube, and industry media mentions all compound.

Layer 6: Schema discipline

Schema is part of the retrieval map the AI layer uses to parse your content's structure. Put BlogPosting, Organization, Person, FAQPage, and DefinedTerm nodes in a single clean @graph with consistent @id values, and deploy it through a method that preserves script content. Some visual builder widgets strip script tags silently, so verify your schema renders in the page source before assuming it's live.
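One way to keep @id values from drifting is to define them once and generate every page's @graph from the same constants. The sketch below assumes a hypothetical site at example.com with a made-up organization and author. The property names (author, publisher, worksFor, headline, datePublished, dateModified, mainEntityOfPage) are standard schema.org properties, but the @id URL pattern is just one convention, and FAQPage and DefinedTerm nodes are left out to keep the example short.

```python
import json

# Hypothetical identifiers, defined once and reused on every page.
BASE = "https://example.com"
ORG_ID = f"{BASE}/#organization"
AUTHOR_ID = f"{BASE}/#author-jane-doe"


def build_graph(page_url: str, headline: str, date_published: str, date_modified: str) -> dict:
    """Build one JSON-LD @graph whose nodes reference each other by @id."""
    return {
        "@context": "https://schema.org",
        "@graph": [
            {"@type": "Organization", "@id": ORG_ID, "name": "Example Co", "url": BASE},
            {"@type": "Person", "@id": AUTHOR_ID, "name": "Jane Doe", "worksFor": {"@id": ORG_ID}},
            {
                "@type": "BlogPosting",
                "@id": f"{page_url}#article",
                "headline": headline,
                "datePublished": date_published,
                "dateModified": date_modified,
                "author": {"@id": AUTHOR_ID},
                "publisher": {"@id": ORG_ID},
                "mainEntityOfPage": page_url,
            },
        ],
    }


def to_script_tag(graph: dict) -> str:
    """Serialize the graph into a script tag; escape "</" so the tag cannot close early."""
    payload = json.dumps(graph, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'
```

Because the article node only references the Organization and Person by @id, changing an identity means editing one constant instead of hunting through templates.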

The Architecture-First Build Order

Each layer constrains the layers below it. Working upward through constraints is usually more expensive than working downward. Build order is a dependency relationship, not a preference. Fix Layer 2 after Layer 3 is already written, and you have to rewrite Layer 3 to match the new entity. Fix Layer 1 after the entity layer is locked, and the cluster points at a stale identity you have to either rebuild or live with.

Layer 2 needs Layer 1

Entity consistency is hard to leverage without cluster depth. A locked Person @id across three pages carries little weight. The same @id across thirty interconnected pages carries far more.

Layer 3 needs Layer 2

Extractable chunks reference entities. Writing chunks before locking entities means rewriting them later when the canonical identity changes.

Layer 6 needs everything upstream

Schema is the retrieval map for everything above it. Building schema against moving targets means fixing it repeatedly as Layers 1 through 5 stabilize.

Most teams do this in reverse. They schema-up first because schema feels like "the AI thing." They write extractable chunks second because that's what the optimization guides prescribe. They get to cluster depth and entity consistency months later, once individual page fixes stop moving the AI visibility needle. By that point, every earlier layer has to be rebuilt against decisions already shipped.

Retrofitting works. It is usually far more expensive and slower than building in order because each fix forces changes downstream. Tighten Layer 2 and you touch Layer 3 chunks that referenced the old entity, which touches Layer 6 schema that pointed to the old structure. Build in order or pay the dependency tax. There's no third option.
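The dependency tax can be made concrete with a small sketch. It encodes only the dependencies stated above (Layer 2 needs Layer 1, Layer 3 needs Layer 2, Layer 6 needs everything upstream) and computes which layers have to be revisited when one layer changes. Dependencies for Layers 4 and 5 are not spelled out in this article, so they appear here only as inputs to Layer 6. That is an assumption of the sketch.

```python
# Which layers each layer depends on, as stated in the text.
NEEDS = {
    2: {1},
    3: {2},
    6: {1, 2, 3, 4, 5},
}


def downstream_of(changed: int) -> list[int]:
    """Return every layer that directly or indirectly depends on the changed layer."""
    affected: set[int] = set()
    frontier = [changed]
    while frontier:
        current = frontier.pop()
        for layer, deps in NEEDS.items():
            if current in deps and layer not in affected:
                affected.add(layer)
                frontier.append(layer)
    return sorted(affected)


if __name__ == "__main__":
    for layer in range(1, 7):
        print(f"Change Layer {layer} -> revisit {downstream_of(layer)}")
```

Changing Layer 2 returns Layers 3 and 6, which matches the chain described above: the chunks that referenced the old entity, then the schema that pointed at the old structure.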

What Most SEO Teams Are Still Doing Wrong

Most SEO audits check for technical fundamentals: indexation, Core Web Vitals, meta tags, internal linking. These are necessary, but they do not surface the architectural questions that determine AI citation. Tactical audits were designed for organic ranking, not retrieval-layer citation, so the architectural gaps stay invisible even when every tactical item on the audit report is green.

The audit misses questions like these:

- Do @id values match across every page on the site, or do Organization and Person schemas drift between templates?
- Is each author defined as a Person with an @id, or just as a byline with a name?
- Is your methodology defined with an @id matched across the domain, or just mentioned in copy?

These aren't primarily quality problems. They're architectural gaps, and tactical audits often miss them because tactical audits were built for a different job.

The framework above is the diagnostic lens. The next section is the execution path. Audit the layers in order, fix the first weak layer before moving down, and re-measure citation visibility against the same query set monthly. That's the loop.

A 30-Day Architecture Diagnostic

You can run this audit on your own site in about four hours spread across a month. It doesn't require tooling beyond browser developer tools and Search Console. The point isn't the checklist. The point is that after Week 1 you'll have a clear, structural read on which layer is actually holding your site back, and the remaining three weeks will confirm or refine it. Most teams find the constraint is not where they assumed it was.

Cluster and Entity Audit

List your top 5 pages by organic traffic. For each, identify the topical cluster and count the supporting pages in it. Pull the JSON-LD and verify that Organization @id, Person @id, and methodology @id values are identical across all pages in the cluster. Flag drift. A formal technical SEO audit covers this end-to-end if you want a structured starting point.
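Once you have pulled the JSON-LD, flagging drift can be scripted. The sketch below assumes you have already parsed each page's JSON-LD into a Python dict or list and keyed it by URL. The function names are hypothetical. It groups Organization, Person, and DefinedTerm nodes by type and name, then reports any entity that shows up with more than one @id (or with no @id on some pages).

```python
from collections import defaultdict

TRACKED_TYPES = {"Organization", "Person", "DefinedTerm"}


def iter_nodes(value):
    """Yield every dict inside a parsed JSON-LD document, including @graph members."""
    if isinstance(value, dict):
        yield value
        for child in value.values():
            yield from iter_nodes(child)
    elif isinstance(value, list):
        for item in value:
            yield from iter_nodes(item)


def find_id_drift(pages: dict) -> dict:
    """Map (type, name) -> {@id: [urls]} for entities that carry more than one @id."""
    seen = defaultdict(lambda: defaultdict(list))
    for url, doc in pages.items():
        for node in iter_nodes(doc):
            raw_type = node.get("@type")
            types = [raw_type] if isinstance(raw_type, str) else (raw_type or [])
            for node_type in types:
                if node_type in TRACKED_TYPES and "name" in node:
                    node_id = node.get("@id", "(missing @id)")
                    seen[(node_type, node["name"])][node_id].append(url)
    return {key: dict(ids) for key, ids in seen.items() if len(ids) > 1}
```

Matching on name is a simplification: two different people with the same name would be reported as drift, and an entity whose name also changes between templates would be missed. Review the output by hand.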

Chunk Audit

Take the top 20 H2 sections across your top 5 pages. Read only the first paragraph under each H2. Does it answer the section's implied question in isolation? If you can screenshot just that paragraph and still understand what was asked and answered, it's extractable. Count your extractable ratio.
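If reading twenty sections by hand feels slow, the extraction step can be scripted and the judgment left to you. The sketch below uses Python's built-in html.parser to pull the first paragraph under each H2 from a page's HTML. It assumes headings are real h2 elements and paragraphs are real p elements, which is not true of every page builder. The extractable ratio is then just the share of sections you marked as passing.

```python
from html.parser import HTMLParser


class _FirstParagraphs(HTMLParser):
    """Collect [heading, first_paragraph] pairs for every h2 in a document."""

    def __init__(self):
        super().__init__()
        self.sections = []
        self._capture = None      # "h2", "p", or None
        self._buffer = []
        self._awaiting_p = False  # True between an h2 and its first p

    def handle_starttag(self, tag, attrs):
        if tag == "h2":
            self._capture, self._buffer = "h2", []
        elif tag == "p" and self._awaiting_p:
            self._capture, self._buffer = "p", []

    def handle_data(self, data):
        if self._capture:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag != self._capture:
            return
        text = " ".join("".join(self._buffer).split())
        if tag == "h2":
            self.sections.append([text, ""])
            self._awaiting_p = True
        else:
            self.sections[-1][1] = text
            self._awaiting_p = False
        self._capture = None


def first_paragraphs(html: str) -> list:
    parser = _FirstParagraphs()
    parser.feed(html)
    return [tuple(section) for section in parser.sections]


def extractable_ratio(judgments: list) -> float:
    """judgments: one True/False per section, recorded by a human reviewer."""
    return sum(judgments) / len(judgments) if judgments else 0.0
```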

Cross-Platform Audit

Search your brand name on YouTube, LinkedIn, and three industry publications in your space. Count mentions. If the count is close to zero on any platform, you have a Layer 5 gap.

Schema and Freshness Audit

Confirm schema is deployed through a method that preserves script content. Check your page source to verify it's rendering. Verify datePublished and dateModified are ISO 8601 with timezone.
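Both checks can be run against saved page source. The sketch below is a minimal version using only the standard library: it counts the JSON-LD script blocks that actually survived into the HTML, then flags any datePublished or dateModified value that is not a parseable ISO 8601 datetime with a timezone. The function names are hypothetical, and it inspects whatever HTML string you pass in, so for JavaScript-injected schema you would need the rendered DOM rather than the raw response.

```python
import json
from datetime import datetime
from html.parser import HTMLParser


class _JsonLdScripts(HTMLParser):
    """Collect parsed contents of <script type="application/ld+json"> blocks."""

    def __init__(self):
        super().__init__()
        self.blocks = []
        self._in_jsonld = False
        self._buffer = []

    def handle_starttag(self, tag, attrs):
        if tag == "script" and dict(attrs).get("type") == "application/ld+json":
            self._in_jsonld, self._buffer = True, []

    def handle_data(self, data):
        if self._in_jsonld:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag == "script" and self._in_jsonld:
            self.blocks.append(json.loads("".join(self._buffer)))
            self._in_jsonld = False


def has_timezone(value: str) -> bool:
    """True if value parses as an ISO 8601 datetime that carries a UTC offset."""
    if value.endswith("Z"):  # older Python versions do not parse a trailing Z
        value = value[:-1] + "+00:00"
    try:
        return datetime.fromisoformat(value).tzinfo is not None
    except ValueError:
        return False


def audit_dates(page_source: str):
    """Return (number of JSON-LD blocks found, list of problem descriptions)."""
    parser = _JsonLdScripts()
    parser.feed(page_source)
    problems = []

    def walk(node):
        if isinstance(node, dict):
            for key in ("datePublished", "dateModified"):
                if key in node:
                    value = node[key]
                    if not (isinstance(value, str) and has_timezone(value)):
                        problems.append(f"{key}: {value!r}")
            for child in node.values():
                walk(child)
        elif isinstance(node, list):
            for item in node:
                walk(item)

    for block in parser.blocks:
        walk(block)
    return len(parser.blocks), problems
```

A block count of zero on a page where you deployed schema is the stripped-script symptom described above.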

Why AI Overview Architecture Matters Now

The CTR loss on AIO-present SERPs is measurable: Ahrefs found a roughly 34.5% lower average CTR for top-ranking pages when an AI Overview appears. Fractl and Search Engine Land's 2025 survey found 39% of marketers reported traffic losses. The trend isn't reversing, and the queries that don't trigger an AI Overview today may trigger one next quarter. The site you're building this year has to be architecturally ready for citation, not just organically ready for ranking.

Citation inside an AI Overview carries brand value even without a click. Kevin Indig's research showed users remember brand names from AI Overview citations. The visibility game is shifting from "capture the click" to "earn the citation," and architecture is a meaningful part of earning it.

If your team is still auditing for page-level tactics when the constraint is architectural, you're optimizing the wrong layer. The six-layer framework is a specific application of architecture-first principles for AI Overview citation, complementary to Zero Page SEO, Rank Outlaw's broader methodology for AI search visibility.

Frequently Asked Questions About How to Rank in AI Overviews

How long does it take to see results in AI Overviews after fixing architectural issues?

Schema and entity consistency changes can take weeks to show in AI Overview behavior. Cross-platform signal buildup (Layer 5) usually takes longer.

Does fixing schema alone help?

Marginally. Schema (Layer 6) is a retrieval map, not the thing being mapped. If Layers 1 through 5 are weak, better schema just describes weak architecture more clearly.

Is this different from Generative Engine Optimization (GEO) or AI Engine Optimization (AEO)?

Those terms cover the same problem space. Most GEO/AEO advice stays at the tactics layer: formatting, schema, answer structure. The build-order framework sits below that layer and asks what needs to be true architecturally before tactics matter.

What if my site is small and I can't build cluster depth quickly?

Keep Layer 1 narrow. Build one tight cluster first rather than spreading thin across many topics. Lock entity consistency inside that cluster. Make those sections extractable. The build order still applies; you're just starting with a smaller footprint.

Does AI Overview optimization help with organic ranking too?

The overlap is moderate. Many Layer 1-6 improvements also help organic rankings. But some optimizations that help AI citation (like cross-platform signal density) are neutral or only weakly correlated with organic ranking. Treat the two as related but distinct.

How do I measure AI citation rate?

Pick 6 target queries. Run them on ChatGPT, Perplexity, and Google AI Overviews at baseline, 30 days, and 90 days. Record which brands appear in the answer and which competitors show up.
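A spreadsheet works fine for this, but if you prefer to keep the log in code, here is a minimal sketch of the record and the rate calculation. The field names and checkpoint labels are my own, and the observations are entered by hand after running each query. Nothing here calls an API for any of those products.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Observation:
    checkpoint: str      # e.g. "baseline", "day30", "day90"
    engine: str          # e.g. "ChatGPT", "Perplexity", "Google AI Overviews"
    query: str
    cited_brands: tuple  # brands that appeared in the answer, recorded manually


def citation_rate(observations: list, brand: str, checkpoint: str) -> float:
    """Share of (engine, query) runs at a checkpoint in which the brand appeared."""
    relevant = [o for o in observations if o.checkpoint == checkpoint]
    if not relevant:
        return 0.0
    hits = sum(1 for o in relevant if brand in o.cited_brands)
    return hits / len(relevant)
```

With 6 queries across 3 engines, each checkpoint has 18 runs, so a single new citation moves the rate by about 5.6 percentage points. Read changes at that scale as directional, not precise.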